Table of Links

3.2 Learning Residual Policies from Online Correction

3.3 An Integrated Deployment Framework and 3.4 Implementation Details

4.2 Quantitative Comparison on Four Assembly Tasks

4.3 Effectiveness in Addressing Different Sim-to-Real Gaps (Q4)

4.4 Scalability with Human Effort (Q5) and 4.5 Intriguing Properties and Emergent Behaviors (Q6)

6 Conclusion and Limitations, Acknowledgments, and References

A. Simulation Training Details

B. Real-World Learning Details

C. Experiment Settings and Evaluation Details

D. Additional Experiment Results

2 Preliminaries

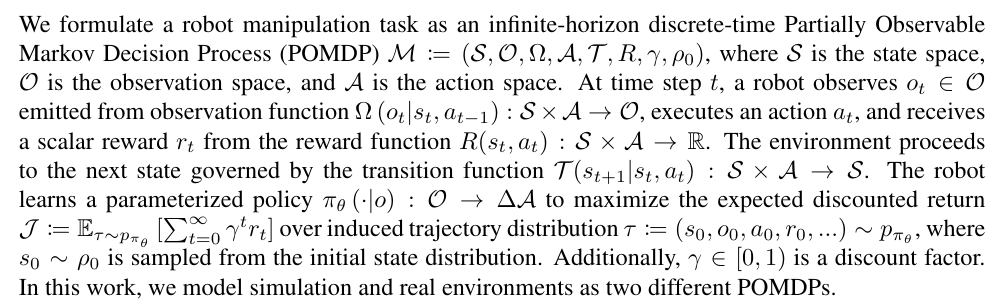

2.1 Problem Formulation

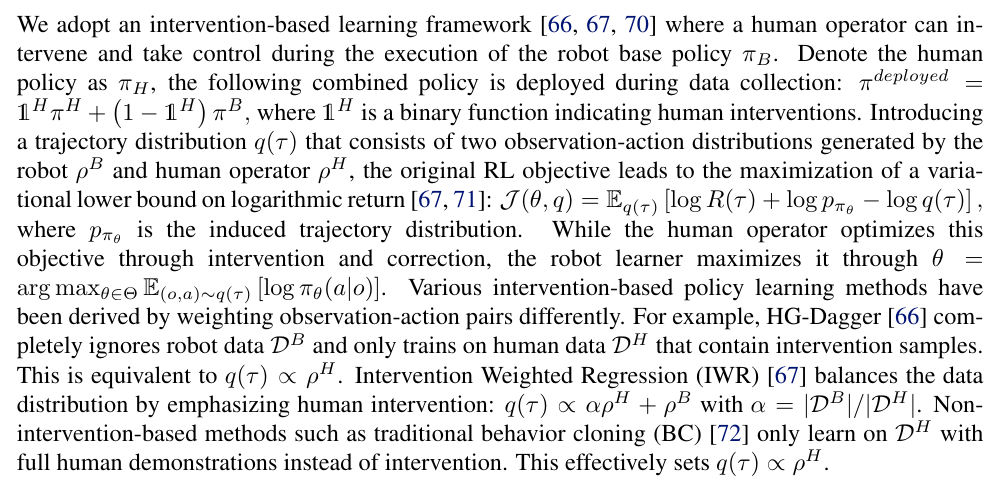

2.2 Intervention-Based Policy Learning

3 TRANSIC: Sim-to-Real Policy Transfer by Learning from Online Correctio

An overview of TRANSIC is shown in Fig. 2. At a high level, after training the base policy in simulation, we deploy it on the real robot while monitored by a human operator. The human interrupts the autonomous execution when necessary and provides online correction through teleoperation. Such intervention and online correction are collected to train a residual policy, after which both base and residual policies are deployed to complete contact-rich manipulation tasks. In this section, we first elaborate on the simulation training phase with several important design choices that reduce sim-to-real gaps before transfer. We then introduce residual policies learned from human intervention and online correction. Subsequently, we present an integrated framework for deploying the base policy alongside the learned residual policy during testing. Finally, we provide implementation details.

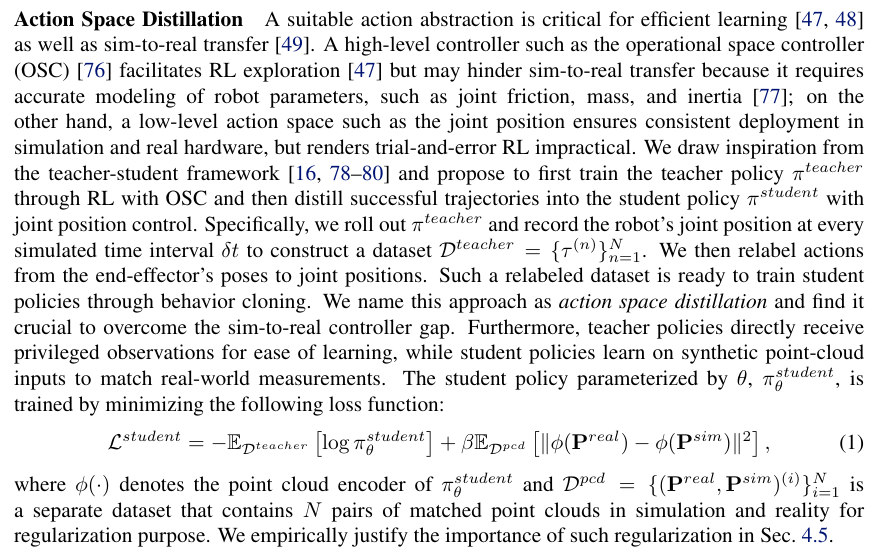

3.1 Learning Base Policies in Simulation with RL

3.2 Learning Residual Policies from Online Correctio

3.3 An Integrated Deployment Framework

3.4 Implementation Details

We use Isaac Gym [10] as the simulation backend. Proximal policy optimization (PPO [84]) is used to train teacher policies from scratch. We design task-specific reward functions and curricula when necessary to facilitate RL training. We apply exhaustive domain randomization during teacher policy training and proper data augmentation during student policy distillation. Student policies are parameterized as Gaussian Mixture Models (GMMs [68]). We have also experimented with other state-of-the-art policy models, such as Diffusion Policy [85], but did not observe better performances. See the Appendix Sec. A for more details about the simulation training phase and additional comparisons. During the human-in-the-loop data collection phase, we use a 3Dconnexion SpaceMouse as the teleoperation interface. Residual policies use state-of-the-art point cloud encoders, such as PointNet [86] and Perceiver [87, 88], and GMM as the action head. We follow the best practices to train residual policies, including using learning rate warm-up and cosine annealing [89]. More training hyperparameters are provided in the Appendix Sec. B.4.

Authors:

(1) Yunfan Jiang, Department of Computer Science;

(2) Chen Wang, Department of Computer Science;

(3) Ruohan Zhang, Department of Computer Science and Institute for Human-Centered AI (HAI);

(4) Jiajun Wu, Department of Computer Science and Institute for Human-Centered AI (HAI);

(5) Li Fei-Fei, Department of Computer Science and Institute for Human-Centered AI (HAI).

This paper is